By continuing, you accept Aulart’s Privacy Policy.

Aulart Originals

AI for Music Production

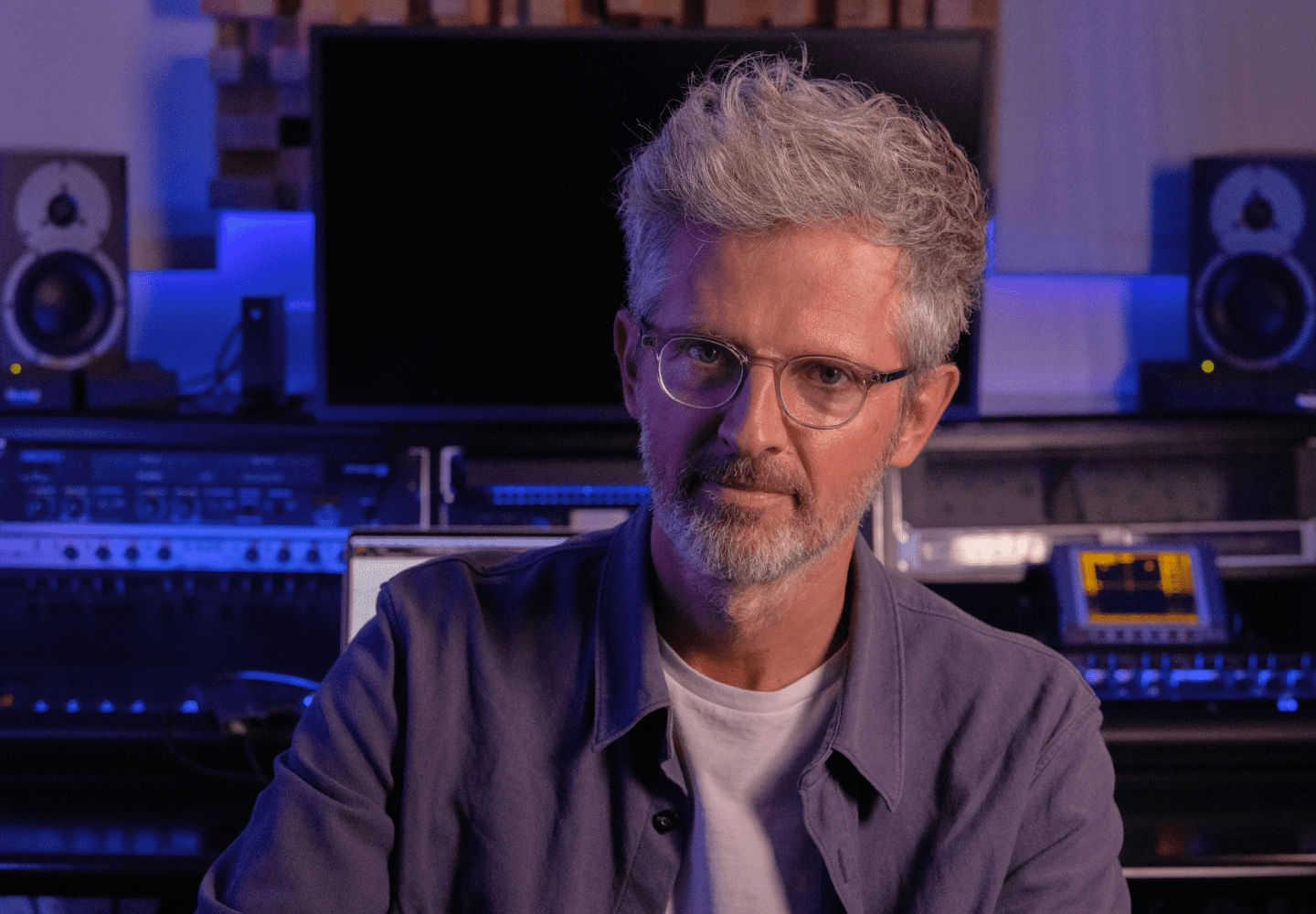

Skygge composed the first album ever made with AI. Discover his advice on utilizing AI tools for your music production, and start a new musical journey.

Leverage AI's transformative role in your music creation.

Access a Free Chapter

This masterclass is included in the Connect Plan membership, providing you with access to hundreds of chapters from masterclasses featuring Grammy-winning producers and international artists.

Leave your email, and you’ll be granted access to select a free chapter of your choice.


What will I learn?

In this chapter, French music producer Benoît Carré shares his journey and insights into using AI for music production. He discusses his experience with Flow Machines, a technology developed by François Pachet's team that captures the style of musicians and generates songs. Carré explains how this technology has revolutionized his creative process, allowing him to explore new paths and enhance his own creativity. He also shows how these AI tools can help in composing music, with concrete examples and demonstrations of the tools in real-time song creation. This chapter offers valuable insights and skills for musicians interested in incorporating AI into their music production process.

The chapter showcases the Flow Machines system, which lets users feed in MIDI files and generate unique compositions. The professor demonstrates two examples of songs created using this technology, highlighting the unexpected and intriguing melodies it can produce. The chapter also discusses AI voice generators, such as the Alter Ego software, which create synthetic singing voices. The professor shares anecdotes from his own journey, including collaborating with artists like Toma and incorporating jazz musicians into his compositions. Overall, this chapter provides valuable insights into the possibilities and techniques of using AI in music production.

Skygge introduces an exciting AI tool that transforms the user's voice into instruments such as violin, saxophone, and trumpet. The tool is provided as a notebook, an interface developed by the researchers themselves. Viewers learn how to install and import the model, then record with their microphone or import an audio file to generate music with different instruments. The chapter also showcases how the tool was used in a song, creating a dramatic and expressive mood with violin and saxophone solos. Overall, this chapter offers valuable insights and skills in utilizing AI for music production and composing.

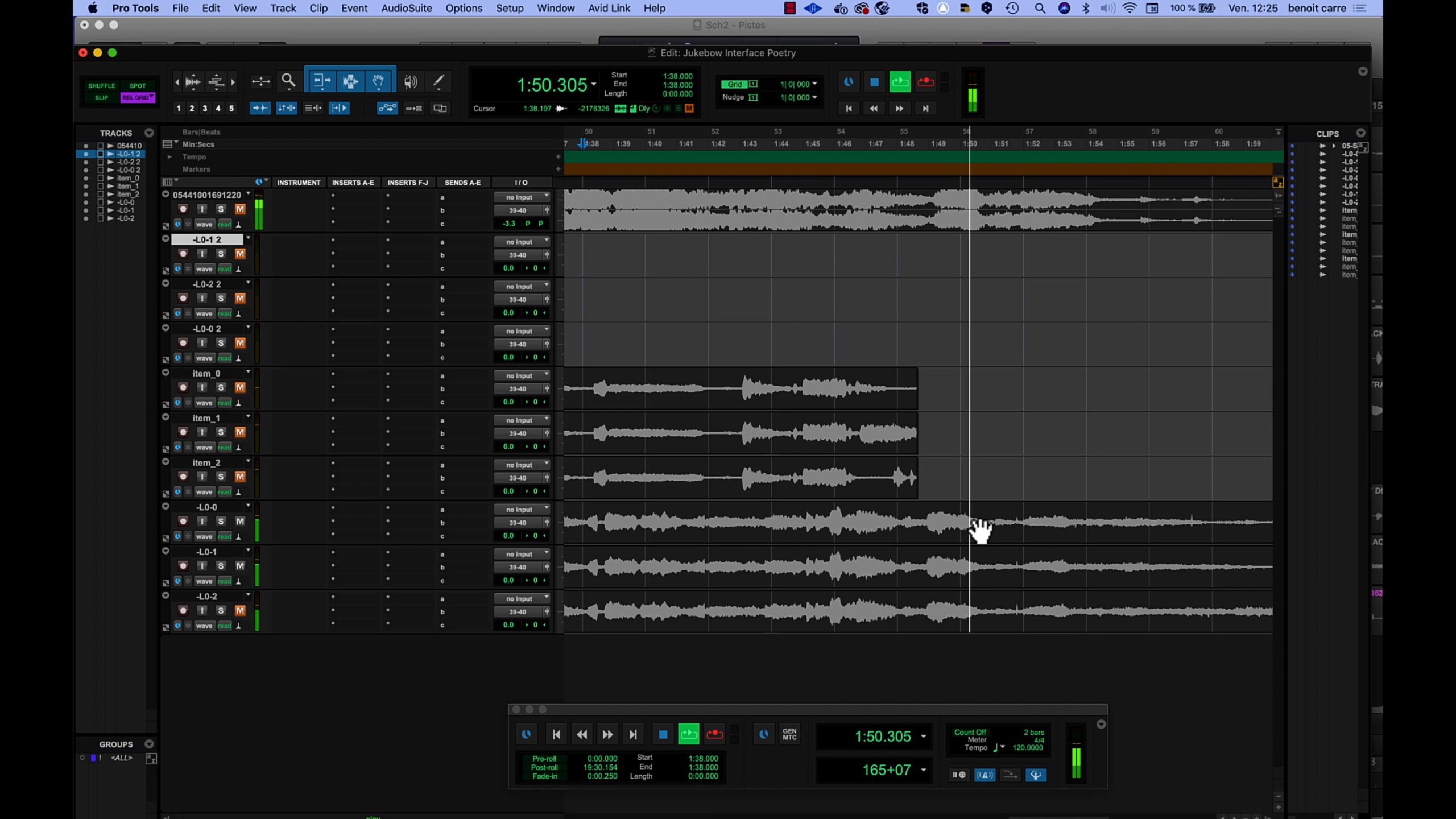

The tool, called Jukebox, is open source and was trained on 1 million songs using unsupervised neural networks. By feeding the system a 10-second extract of a song and setting constraints such as artist or genre, users can generate unique and unexpected musical compositions. The chapter includes examples of songs where Jukebox was used to continue the composition, showcasing the tool’s ability to create emotional and sensitive music. While the process can be time-consuming, the results are often inspiring and worth the wait. Viewers are encouraged to experiment with this technology and explore its potential in their own music production.

The instructor demonstrates how to use apps to manipulate and change the timbre of voices, allowing users to create interesting and unique sound combinations. Additionally, the chapter explores the process of training AI models to generate voices and transform them into different singers or styles. Overall, this chapter provides valuable insights and skills for viewers to experiment with AI in their music production journey.

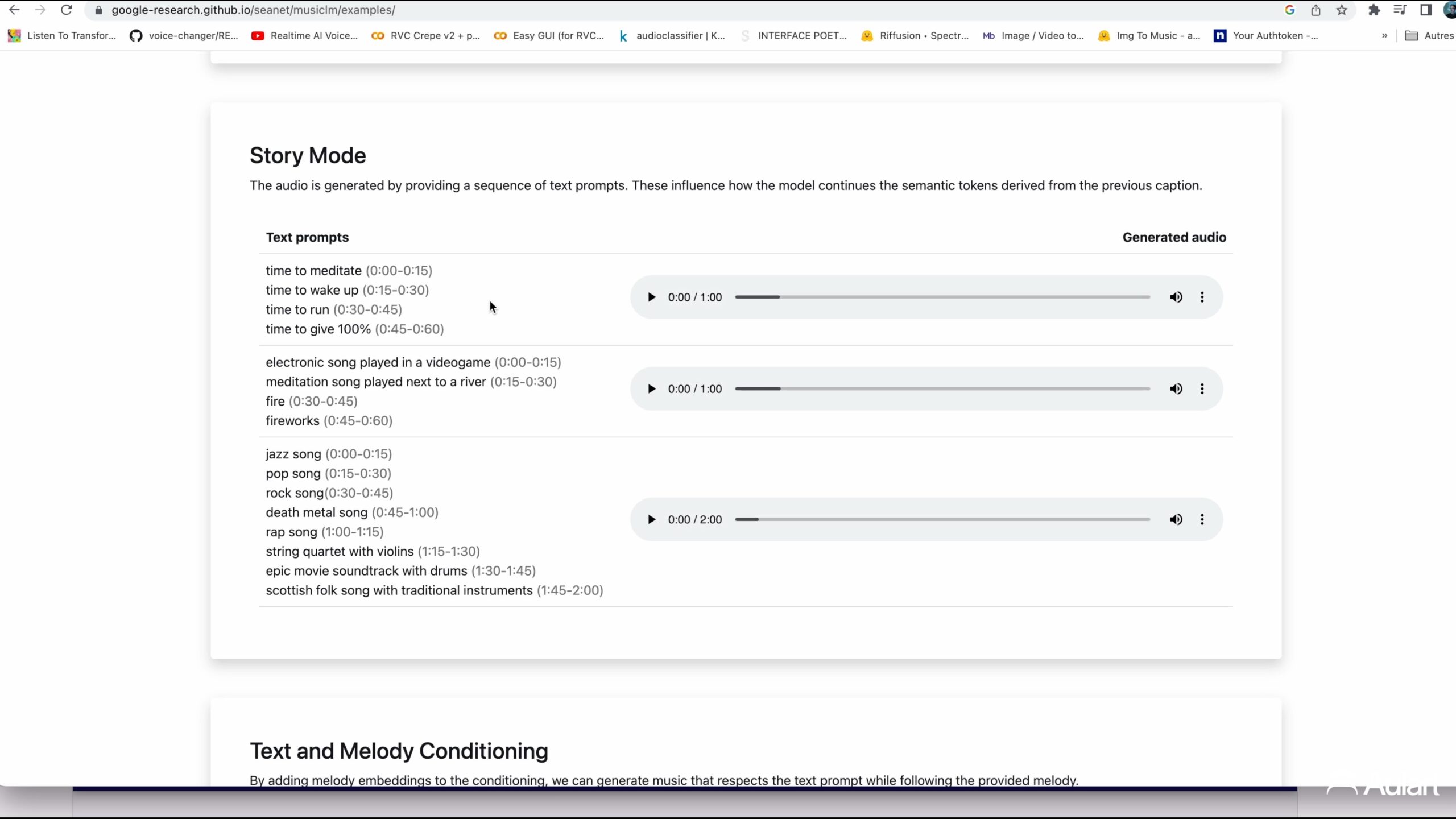

In this chapter of the music production course, Professor Skygge explores the use of AI in music production. He discusses text-to-music tools such as MusicLM, developed by Google, and its equivalent developed by Meta (formerly Facebook). These tools let users describe what they want to hear and generate results, but interaction with the output and control over it are limited. Professor Skygge also introduces Mubert, which offers more precise control and better sound quality, making it suitable for creating stems like drum beats. Additionally, he showcases the Riffusion model, which allows users to experiment with different musical elements like guitar solos. Overall, this chapter provides valuable insights into the capabilities and limitations of AI for music production.

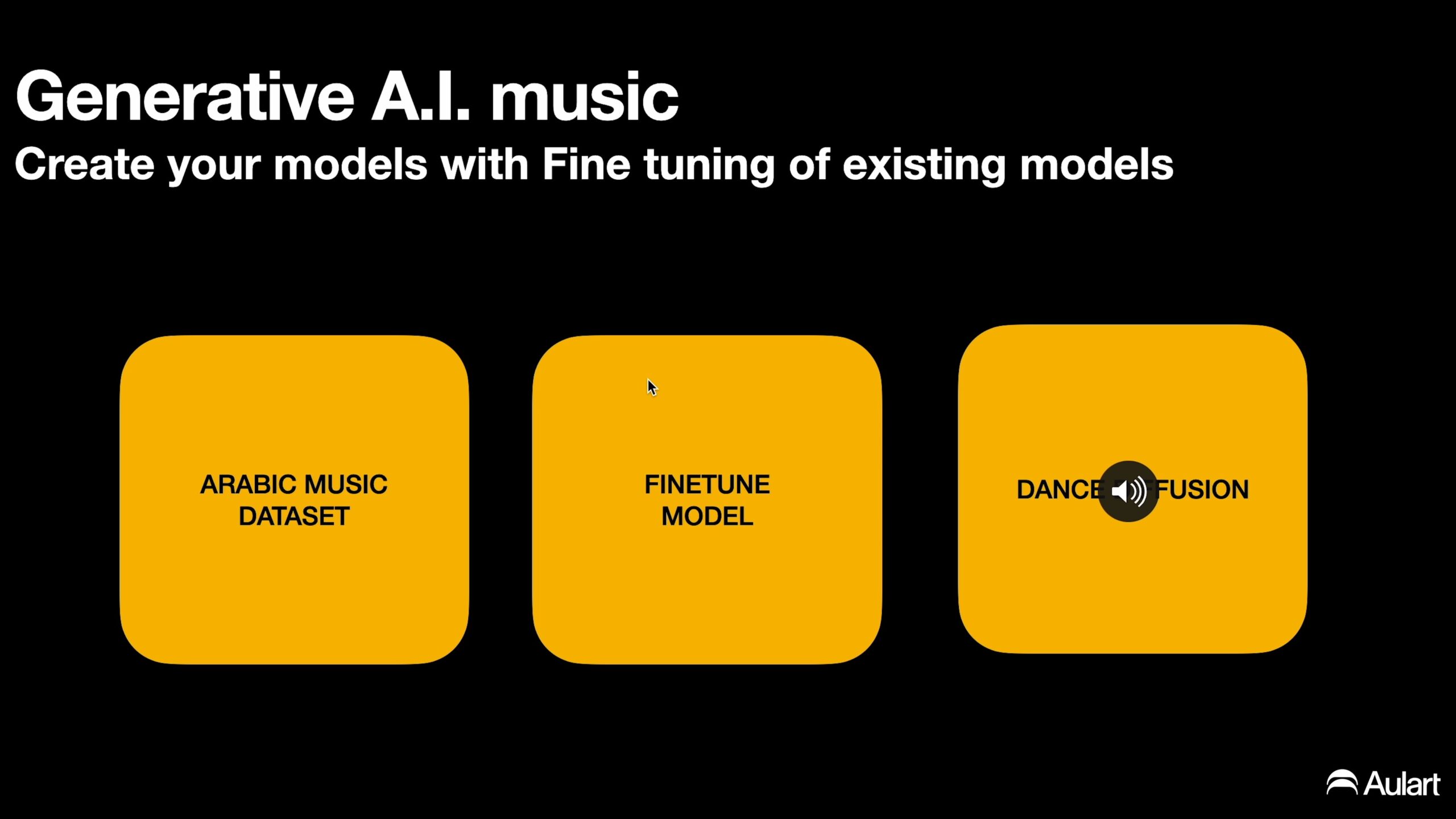

In this chapter, viewers gain valuable insights into using AI for music production. The chapter explores fine-tuning models that generate music by transforming it into noise and then recreating it. The professor shares examples of training a model on an Arabic music dataset and discusses how easy it is to create your own model starting from existing ones. While the results may not be perfect, the chapter highlights the potential for musicians to create their own models and experiment with AI in music production. Additionally, viewers are introduced to Replicate.com, a platform where different models can be used and customized for musical AI generation. Overall, this chapter provides a fascinating exploration of AI’s role in music production and offers practical tools for musicians to experiment with.

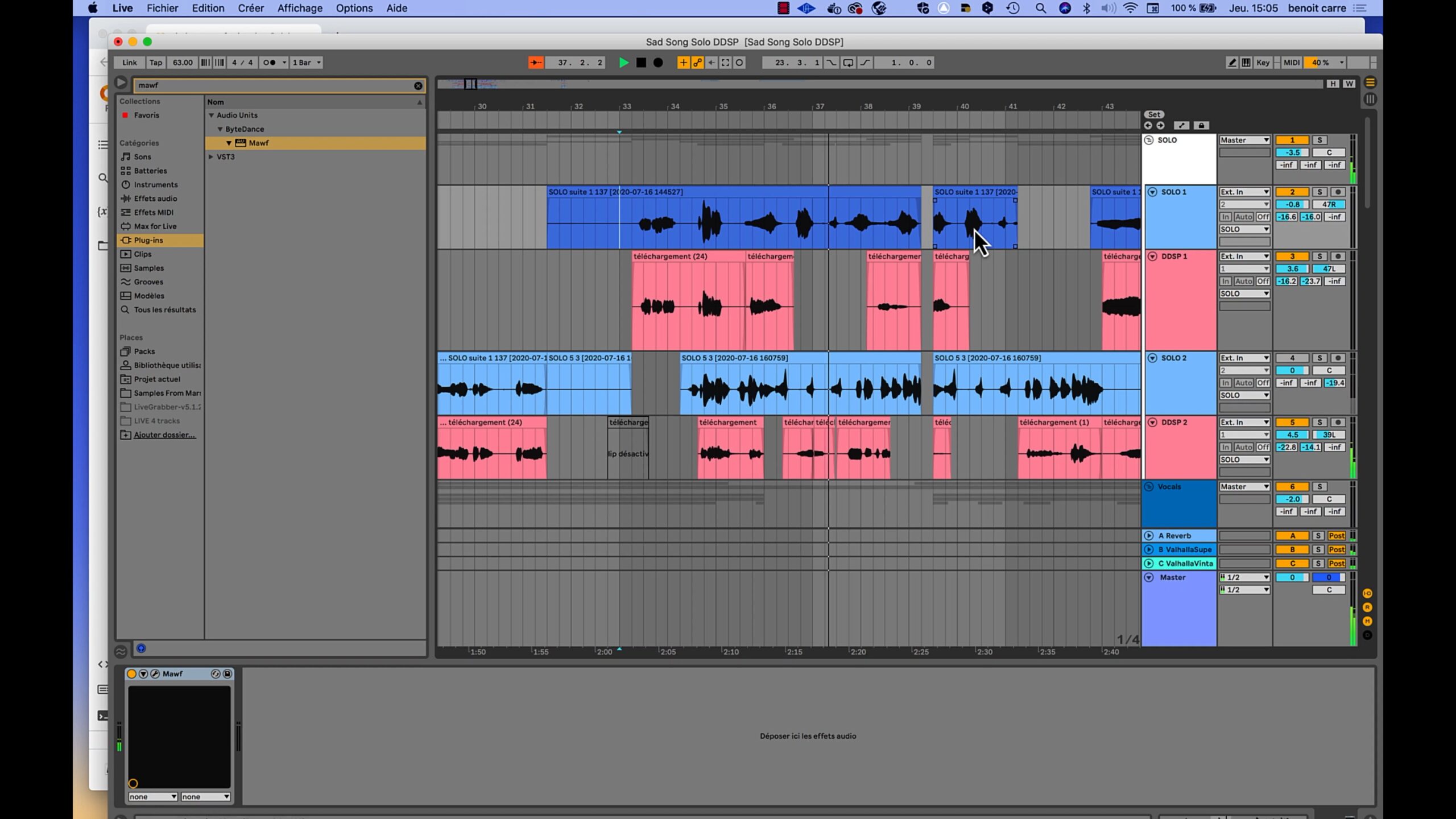

In this chapter of the AI for Music Production masterclass with Professor Skygge, viewers are introduced to exciting AI tools for music production. One such tool is Jamahook, a plugin that analyzes your session and suggests loops and stems tailored to your song. Another tool, Magenta Studio, can be imported into Ableton to generate 4-bar compositions and change the groove of your drums using MIDI. Professor Skygge also recommends joining the Water & Music Discord community for further insights and information. By being curious and exploring these AI music production tools, viewers can discover new and interesting ways to enhance their work.

Skygge

Benoît Carré, a.k.a. SKYGGE, is at the vanguard of musicians exploring the creative potential of artificial intelligence (A.I.). Since 2015, he has worked alongside research teams, helping them develop A.I. prototypes for musicians and creating a bridge between innovation and music creation. With his debut album Hello World, released in 2018, he became one of the first artists to produce pop music using A.I. technologies throughout the music-making process. His experience as a French pop songwriter and producer (“Voyage en Italie” Lilicub, Imany, Françoise Hardy, Maurane) led him to take the risk of composing differently, listening to the musical fragments generated by A.I. and integrating them into his songs for their unique beauty.

Make an investment in your artist career

- 8 Chapters

- Subtitles: Spanish, English, German, French & Portuguese

- 55 min

- English

- Video 4K

- AI Tools