Myth 1: Always cut EQ not boost

The Myth: “boosting EQ adds noise” and “boosts with digital EQs sound terrible” and also “boosting adds phase distortion”.

Why it’s wrong: This was the top tip from internet experts 20 years ago, but cutting and boosting are functionally the same in terms of sound, phase, and noise. If you cut in one case and boost in the other, match the gain, and compare the two, you will find they are equivalent.

Try a test for yourself: Create a track with audio playing and add a high shelf boost of +10 dB at 1 kHz. Now duplicate that track, switch the high shelf to a low shelf at the same frequency, and set its gain to -10 dB. Add +10 dB of make-up gain after the EQ so that it matches the other channel, then invert the polarity on one of the two tracks to run a null (phase cancellation) test. If you followed the instructions (and your EQ is of good quality) then your output will go silent. This is because the cut and the boost are exactly phase matched, so neither has an advantage over the other.
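If you would rather check the null test numerically, here is a minimal sketch. It assumes the shelves are the biquads from the widely used RBJ Audio EQ Cookbook, which is an assumption: the EQ in your DAW may be designed differently. The function names (`shelf`, `biquad`, `null_test_residual`) are made up for this example.

```python
import math
import random

def shelf(kind, gain_db, f0, fs, slope=1.0):
    """RBJ-cookbook shelving biquad (assumed design); returns normalised (b, a)."""
    A = 10 ** (gain_db / 40)
    w0 = 2 * math.pi * f0 / fs
    c = math.cos(w0)
    alpha = math.sin(w0) / 2 * math.sqrt((A + 1 / A) * (1 / slope - 1) + 2)
    k = 2 * math.sqrt(A) * alpha
    s = 1 if kind == "high" else -1  # high and low shelf differ only in these signs
    b = [A * ((A + 1) + s * (A - 1) * c + k),
         -s * 2 * A * ((A - 1) + s * (A + 1) * c),
         A * ((A + 1) + s * (A - 1) * c - k)]
    a = [(A + 1) - s * (A - 1) * c + k,
         s * 2 * ((A - 1) - s * (A + 1) * c),
         (A + 1) - s * (A - 1) * c - k]
    return [v / a[0] for v in b], [v / a[0] for v in a]

def biquad(x, b, a):
    """Run a list of samples through one biquad (direct form I)."""
    y = []
    x1 = x2 = y1 = y2 = 0.0
    for x0 in x:
        y0 = b[0] * x0 + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2
        x2, x1, y2, y1 = x1, x0, y1, y0
        y.append(y0)
    return y

def null_test_residual(makeup_db=10.0, gain_db=10.0, f0=1000.0, fs=48000, n=4096):
    """Largest sample left over after subtracting the 'cut' track from the 'boost' track."""
    rng = random.Random(0)
    noise = [rng.uniform(-0.1, 0.1) for _ in range(n)]    # stand-in for your audio
    boosted = biquad(noise, *shelf("high", +gain_db, f0, fs))
    cut = biquad(noise, *shelf("low", -gain_db, f0, fs))
    makeup = 10 ** (makeup_db / 20)                       # make-up gain as a linear factor
    # Subtracting is the same as inverting polarity on one track and summing.
    return max(abs(p - makeup * q) for p, q in zip(boosted, cut))

print("residual with +10 dB make-up gain:", null_test_residual())
print("residual with no make-up gain:   ", null_test_residual(makeup_db=0.0))
```

With the make-up gain in place the residual should be down at floating-point rounding level, which is the numerical version of "your output will go silent". Without it, the two tracks no longer cancel.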

In analog systems each EQ band's gain required an amp circuit. With cheap components, each of those amps could add noise when boosting. In the digital realm, modern mixing systems process audio as 32-bit floating point or better. For comparison, 16-bit audio has a noise floor around -96 dB, while 32-bit float has a theoretical dynamic range of roughly 1,528 dB (!), so you do not need to worry about adding noise with a boost. Floating point data scales very well, without distortion or loss, in modern mixing systems. Also, as we said: if you are matching gain at the output stage then any noise is equivalent for a cut or a boost.
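The dynamic range figures can be sanity-checked with a few lines of arithmetic. This is a rough sketch: for fixed point it takes the ratio of full scale to one quantisation step, and for 32-bit float it takes the ratio of the largest to the smallest normal value. Other definitions exist and give slightly different numbers, so treat the float result as "in the region of" the commonly quoted figure rather than an exact match. The function names are made up for this example.

```python
import math

def fixed_point_range_db(bits):
    """Ratio of full scale to one quantisation step, in dB."""
    return 20 * math.log10(2 ** bits)

def float32_range_db():
    """Ratio of the largest to the smallest normal 32-bit float value, in dB."""
    largest = (2 - 2 ** -23) * 2.0 ** 127
    smallest_normal = 2.0 ** -126
    return 20 * math.log10(largest / smallest_normal)

for bits in (16, 24):
    print(f"{bits}-bit fixed point: about {fixed_point_range_db(bits):.0f} dB")
print(f"32-bit float: about {float32_range_db():.0f} dB")
```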

One reason why cutting (rather than boosting) can be a beneficial workflow is that adding gain can trick you into thinking something sounds better, in the same way that turning the channel up sounds better. Your ear can hear the sound more clearly if you’ve added +4 dB of boost, even if you’ve not got the EQ correct. So it’s logical that reducing problematic frequencies and then increasing the overall gain is a safer workflow for avoiding mistakes. But that doesn’t mean “never boost”, nor does it mean “always cut”. If your guitars need a presence boost at 4 kHz and 5.5 kHz, it makes no sense to cut every other frequency instead.

With regard to phase distortion or phase shifting, a boost produces the same amount of phase shift as the equivalent cut… just inverted.
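To see the "same phase shift, just inverted" point in numbers, the sketch below compares a +6 dB and a -6 dB bell at 4 kHz. It assumes the peaking biquad from the RBJ Audio EQ Cookbook (an assumption, your EQ may differ), and the helper names `peaking` and `phase_deg` are made up for this example.

```python
import cmath
import math

def peaking(gain_db, f0, fs, q=1.0):
    """RBJ-cookbook peaking (bell) biquad; assumed design for this sketch."""
    A = 10 ** (gain_db / 40)
    w0 = 2 * math.pi * f0 / fs
    alpha = math.sin(w0) / (2 * q)
    c = math.cos(w0)
    b = [1 + alpha * A, -2 * c, 1 - alpha * A]
    a = [1 + alpha / A, -2 * c, 1 - alpha / A]
    return b, a

def phase_deg(b, a, freq, fs):
    """Phase shift of the filter at one frequency, in degrees."""
    z = cmath.exp(-1j * 2 * math.pi * freq / fs)  # z^-1 evaluated on the unit circle
    h = (b[0] + b[1] * z + b[2] * z * z) / (a[0] + a[1] * z + a[2] * z * z)
    return math.degrees(cmath.phase(h))

fs = 48000
boost = peaking(+6.0, 4000.0, fs)
cut = peaking(-6.0, 4000.0, fs)
for freq in (2000.0, 3000.0, 5000.0, 8000.0):
    pb = phase_deg(*boost, freq, fs)
    pc = phase_deg(*cut, freq, fs)
    print(f"{freq:6.0f} Hz  boost {pb:+7.2f} deg   cut {pc:+7.2f} deg")
```

At every frequency the two columns should be mirror images of each other: whatever shift the boost introduces, the matching cut introduces the opposite.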

What you should do: Cut and boost as required so that it sounds right in the mix.

Myth 2: High pass everything except your kick and bass

The Myth: “Get rid of unnecessary low frequencies to leave room for your kick and bass. If the sound is a mid-range sound like a guitar, a pad, or a piano then it doesn’t need all that low end clutter, so use a 48 dB/octave high-pass to kill all the unwanted low frequencies on all channels other than the kick and bass tracks.”

Why it’s wrong: It’s true that high-passing to eliminate unnecessary frequencies is a good way to reclaim headroom, so it seems logical that high-pass filtering everything which is not a “bass instrument” would give you a louder mix.

In fact the “unnecessary” parts of the sounds which you are removing can be thought of as their anchor: low room resonances and body resonances below the root harmonic.

Depending on your genre and song, you may find that removing the low end body from all mid-range instruments makes your mix sound sterile, thin, and brittle. Many instruments rely on the lower end of the spectrum to give them body. If a sound has reverberance or resonance below what appears to be its fundamental harmonic, then removing that body resonance will un-anchor the sound.

What you should do:

If you have frequency clashes, the first thing to try is less complex chord voicings, or working on the arrangement. If those are as good as they can be, then you should EQ, filter, and compress based on what the track needs. If a vocal track sounds great with a 48 dB/octave high-pass at 200 Hz then by all means feel free to use one, but judge it based on the track.

If you determine that you want to reduce the low frequencies in a track then try a low shelf with a wide Q first. If at a certain point you need to create more room then of course you can punch in a steep high-pass at that point, but listen carefully. Don’t apply this strategy as a blanket template solution to all tracks at all times; instead, use high-passing based on what the situation requires. Have the tool in your toolbox but don’t hit every track with it like it’s a hammer.
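To get a feel for how much a steep high-pass actually removes, here is a small sketch that estimates the attenuation at a few frequencies. It assumes an ideal Butterworth response where every 6 dB/octave of slope corresponds to one filter order. That is an assumption: real plugins use various filter shapes, so treat the numbers as ballpark. The function name is made up for this example.

```python
import math

def highpass_attenuation_db(freq_hz, cutoff_hz, slope_db_per_octave):
    """Attenuation of an ideal Butterworth high-pass at freq_hz, in dB."""
    order = slope_db_per_octave / 6
    return 10 * math.log10(1 + (cutoff_hz / freq_hz) ** (2 * order))

cutoff = 200.0
for freq in (80.0, 100.0, 150.0, 200.0, 300.0):
    steep = highpass_attenuation_db(freq, cutoff, 48)
    gentle = highpass_attenuation_db(freq, cutoff, 12)
    print(f"{freq:5.0f} Hz: 48 dB/oct removes {steep:5.1f} dB, 12 dB/oct removes {gentle:5.1f} dB")
```

An octave below the cutoff, the steep filter has taken away roughly 48 dB, which in practice means the body of the sound in that region is gone. A gentler slope (or a shelf) leaves far more of it in place.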

Myth 3: Linear Phase EQ is always preferable

The Myth: “Linear Phase EQ is better because it doesn’t mess up your sounds. It keeps all the phases intact and that’s why Mastering Engineers only use linear EQ, because it’s the best. Everyone serious about mixing should use linear-phase EQs to avoid phase distortion and transient smearing.”

The Reality: All EQs are built from filters that change phase relationships in order to raise or lower the level within the chosen frequency ranges. Phase relationship manipulation means, in the very simplest terms, “adding or subtracting a copy of the signal which is offset from the original”, and it’s those offsets which the two types of EQ aim to deal with.

The EQ that you will be most familiar with is called “Minimum Phase EQ”, and this EQ aims to delay each frequency by the smallest possible amount. The drawback to this strategy is that a low frequency requires a different offset than a high frequency does, so in a Minimum Phase EQ the high and low signals are delayed by differing amounts. If a large amount of filtering is applied, the timing difference between input and output from low to high frequency can increase to around 80 microseconds (0.00008 seconds).

Linear Phase EQs aim to keep all the frequencies (from bass to treble) delayed by the same amount. To do this, a Linear Phase EQ waits until the longest processing delay has elapsed, then outputs the entire frequency range at the same time.

So, Minimum Phase tends to “colour” sound, in that it alters the phase relationships slightly from high to low, while Linear Phase preserves those relationships and so keeps whatever flavour is already present. Both have pitfalls: Minimum Phase can add colouration and Linear Phase can add pre-ringing (as a consequence of the delay process).
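The link between linear phase, latency, and pre-ringing is easier to see with a tiny example. The sketch below builds a simple windowed-sinc low-pass, which is one common way to get a linear-phase filter (an assumption for illustration; commercial linear-phase EQs are more sophisticated). The function name and the tap count are made up for this example.

```python
import math

def linear_phase_lowpass(num_taps=101, cutoff_hz=1000.0, fs=48000.0):
    """Windowed-sinc low-pass FIR. The taps are symmetric, which is what gives linear phase."""
    mid = (num_taps - 1) / 2
    fc = cutoff_hz / fs
    taps = []
    for n in range(num_taps):
        t = n - mid
        sinc = 2 * fc if t == 0 else math.sin(2 * math.pi * fc * t) / (math.pi * t)
        window = 0.5 - 0.5 * math.cos(2 * math.pi * n / (num_taps - 1))  # Hann window
        taps.append(sinc * window)
    return taps

fs = 48000.0
taps = linear_phase_lowpass(fs=fs)   # the taps are also the filter's impulse response
peak = max(range(len(taps)), key=lambda i: abs(taps[i]))
latency_ms = 1000 * peak / fs

print("main peak at tap", peak, "of", len(taps))
print(f"latency: {latency_ms:.2f} ms")
print("largest ripple BEFORE the main peak:", max(abs(t) for t in taps[:peak]))
```

Every frequency is delayed by the same amount (the position of the main peak), and because the response is symmetric around that peak, part of the ripple arrives before it. That early ripple is the pre-ringing. Longer, steeper filters mean more latency and more audible pre-ringing.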

What you should do: Sometimes it’s fine to have colouration. After all, the sounds you hear are coloured by nature. Sometimes you want to maintain the colour you have already recorded (which is why Mastering Engineers often use Linear Phase).

But each type of EQ has a trade-off, and which one is preferable depends on the circumstances.

Myth 4: You should never have to EQ any more than +/- 3 dB

The Myth: “There’s something wrong with your recording or instrument sounds if you need to add more than 3 dB of EQ. A real engineer doesn’t use harsh EQ with a lot of gain or attenuation!”

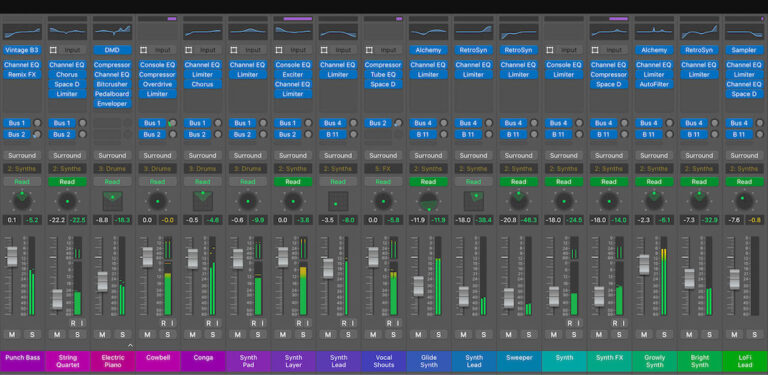

The reality: As you read in the previous tip, equalisation can introduce phase issues, and beginners would sometimes get into a tangle with multi-mic drum setups. Filters are complex to understand, so in an effort to limit phase issues teachers would often advise students to avoid excessive EQ. This is prudent because it helps students learn moderation when EQing, and it also helps prevent harsh mixes. But if you take a look at the mix files of professional engineers you will often see extreme cuts and boosts. There are many videos and articles available online where you can examine the screens of well-established engineers who are happily using more than 10 dB of boost on a track. Again, it all depends.

Myth 5: “Always” and “Never” rules

The Myths: Never mix into compression. Never put reverb into a compressor. Always mix to -12 dB, don’t distort the inputs, …

The reality: Any advice which starts with the words “always” or “never” should be viewed with great caution. Try instead to replace these words with “usually”.

There are techniques which will make your mix sound as you want it, and there will be things that don’t take the song in the right direction. If the end product sounds good, then it does not matter how you got there.

For example: mixing into compression on your busses is a technique used by many mix engineers to get a certain sound, the SSL buss compressor is a prized piece of equipment for a good reason. So mixing into compression is suddenly OK. Equally putting saturation across multiple channels might sound crazy but it’s perfectly allowable.

If it sounds right for the song then it is right. Some songs sound great when they go against all the rules, complete with distorted drums set strangely low in the mix. Don’t be afraid to break the rules. Some songs should sound dirty, some songs should have the drums in the far distance, some songs suit having the vocals sung into the worst possible microphone.

The backing vocal for the song Everlong by the Foo Fighters was sung down the telephone because the backing vocalist (Louise Post) was hundreds of miles away at the time. The mic used to record the incoming phone call? A harmonica microphone with no top end at all (Astatic JT40). That is surely not the perfect vocal chain by the rule-book, but it’s perfect for the song.

I hope you enjoyed these myth-busting tips. Remember that a rule is a guide to help you. It’s a jumping-off point.

50 Industry Music Production Tips You Must Know
